NAS is not for everyone

I keep seeing lots and lots of "get a NAS" videos on YouTube, and most influencers are misleading viewers by not telling them everything and skipping over the nuances. I posted about this on Threads and had discussions with a lot of folks, and then decided to write about it too, so here we are. By the way, if you don't know what a NAS is:

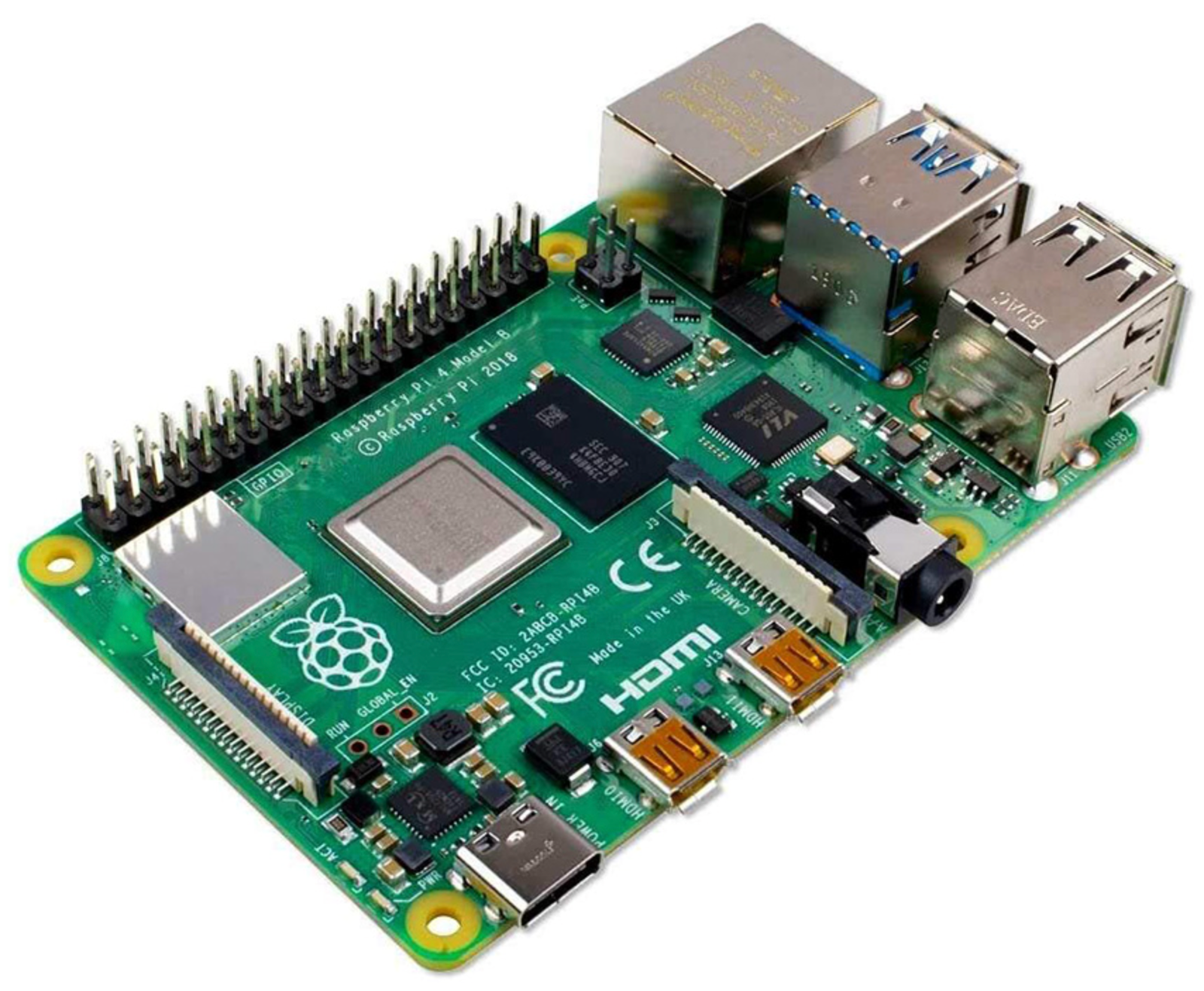

A Network Attached Storage (NAS) is a dedicated storage device connected to your local network that allows multiple users and devices to store, share, and access files from a central location.

Just to be clear, my problem isn't with NAS itself but with influencers misleading non-tech-savvy people and presenting it as the solution to all their problems. Most of the time, they only tell half the story and withhold important information, because that's what helps them persuade people to buy these costly NAS devices. And that is their goal, since most of these videos are sponsored by companies like UGreen and Synology.

Here are some of the things these influencers say, or leave out, that mislead you:

1. NAS is the ultimate replacement for entertainment platforms like Netflix and Prime Video.

No, it's NOT a replacement. I mean, how do you get the upcoming The Boys Season 5 onto your local NAS as soon as it arrives? You can't, unless you download it illegally from somewhere. And once you've watched a show or a movie, why would you want to keep it? Would you really watch the same show again and again?

I agree, though, that if you own media from the past that isn't available anywhere else, you can store it on a NAS and watch it.

But most people don't do this.

2. It's a complete Google Drive/Dropbox/iCloud replacement.

Yes, but with nuances.

Keeping everything only on a NAS isn't recommended, because there needs to be at least one other copy of the same data somewhere. You need a backup, and a NAS is a storage solution, not a backup solution. So either you get another NAS in a different location, or you back up to a cloud service like Backblaze, or both (see the 3-2-1 backup rule: keep three copies of your data, on two different types of storage media, with one copy offsite).

But that adds to the cost, and most non-tech people don't realize this before getting one.

3. Hard drives are prone to failing after 5-7 years.

Yes, you might have a hard drive that keeps running for 10-12 years, but that's just luck. Based on multiple discussions online, most people have to replace their NAS hard drives every 5-7 years to avoid data loss.

And this is not talked about by YouTubers making sponsored videos about NAS.

4. SSDs are not optimized for storing data for a long time.

I have also seen some videos showcasing NAS devices with NVMe SSDs.

Yes, SSDs are fast and silent, but SSDs in general are not recommended for long-term data storage. They store data as electric charges that leak over time, which can lead to data loss within 1-3 years if the drive is left disconnected from power.

Again, you will never see them talk about this.

I love NAS, but I don't like how these YouTube influencers mislead viewers by withholding crucial information. I hope this changes eventually.

Also, I would like to give a huge shout-out and say thanks to the influencers who do not exploit their viewers.